TapID: Rapid Touch Interaction in Virtual Reality Using Wearable Sensing

Can VR be used for extended periods of time? I’ve been thinking about what it would take for this to happen. Reducing motion sickness, which is often linked to vection (the illusory sense of self-motion), could be one piece. A more comfortable headset form factor could be another. I also think the input method matters. We commonly use controllers for VR interaction. Although these are immersive and intuitive for games and shorter sessions, they quickly become tiring and uncomfortable over extended periods.

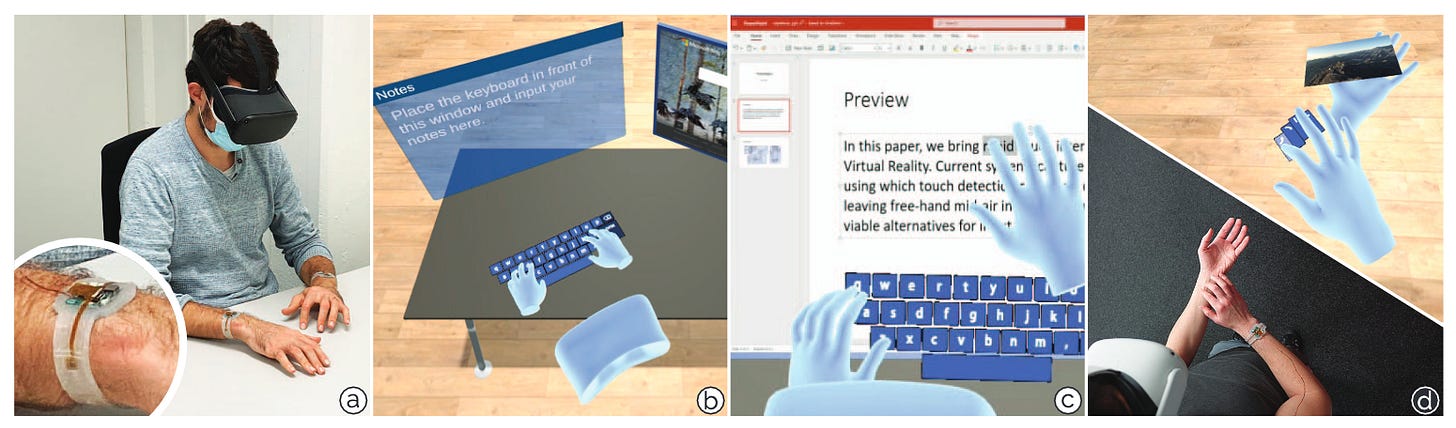

This paper shows us a way toward prolonged VR interaction. TapID takes advantage of passive surfaces (like a table or our own arm) and our familiarity with touching 2D surfaces, pairing them with a new sensing method that makes input comfortable and familiar.

This Week’s Paper

Meier, Manuel, Paul Streli, Andreas Fender, and Christian Holz. 2021. “TapID: Rapid Touch Interaction in Virtual Reality Using Wearable Sensing.” In 2021 IEEE Virtual Reality and 3D User Interfaces (VR), 519–28.

DOI Link: 10.1109/VR50410.2021.00076

Summary

Design

TapID accurately detects when and where a tap is made on a surface, as well as which finger produced it. It does so by combining the VR headset’s built-in camera-based hand tracking with a custom wristband that detects taps. TapID has relatively low latency (130ms) and supports up to 600 input events per minute when both hands are used. For context, a survey found an average typing speed of 50-60 words per minute, which is roughly 250-300 keystrokes per minute under the common convention of five characters per word.
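Comparing typing speed in words with TapID’s rate in input events requires a unit conversion. The following is a minimal sketch, assuming the conventional definition of a “word” as five characters in words-per-minute measurements; the function and constant names are hypothetical.

```python
CHARS_PER_WORD = 5  # conventional "word" length in WPM measurements (assumption)
TAPID_MAX_EVENTS_PER_MIN = 600  # both hands, as reported in the paper


def wpm_to_keystrokes_per_minute(wpm: float) -> float:
    """Convert a words-per-minute typing rate to keystrokes per minute."""
    return wpm * CHARS_PER_WORD


def mean_interval_ms(events_per_minute: float) -> float:
    """Average time between consecutive input events, in milliseconds."""
    return 60_000 / events_per_minute


if __name__ == "__main__":
    for wpm in (50, 60):
        print(f"{wpm} WPM ~ {wpm_to_keystrokes_per_minute(wpm):.0f} keystrokes/min")
    print(f"TapID at {TAPID_MAX_EVENTS_PER_MIN} events/min: "
          f"one event every {mean_interval_ms(TAPID_MAX_EVENTS_PER_MIN):.0f} ms")
```

Under this convention, TapID’s ceiling of 600 events per minute (one event every 100ms on average) is roughly two to two and a half times the keystroke rate of an average typist.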

The wristband has two accelerometers on the wings of the PCB and two on the main board itself, so the authors could test and compare sensor placements. TapID classifies sharp spikes in the accelerometer signals as taps, so that other hand and arm movements are not mistaken for input. The authors then trained a neural network to predict which finger produced each tap.
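To illustrate why sharp spikes separate taps from ordinary arm motion, here is a simple stand-in detector. It is not the authors’ method: it flags samples whose acceleration magnitude jumps well above a slowly adapting baseline. The threshold, smoothing factor, refractory period, and the `detect_taps` name are all hypothetical, and it uses a single accelerometer rather than TapID’s four.

```python
import math
from typing import List, Optional, Sequence, Tuple

Sample = Tuple[float, float, float]  # (x, y, z) acceleration in g


def detect_taps(samples: Sequence[Sample],
                threshold: float = 0.5,
                alpha: float = 0.05,
                refractory: int = 20) -> List[int]:
    """Return indices of samples that look like tap onsets.

    threshold:  minimum deviation (in g) from the baseline magnitude (hypothetical).
    alpha:      smoothing factor of the exponential moving-average baseline.
    refractory: samples ignored after a detection, so the ringing of one
                tap is not counted as a second tap.
    """
    taps: List[int] = []
    baseline: Optional[float] = None
    skip_until = 0
    for i, (x, y, z) in enumerate(samples):
        magnitude = math.sqrt(x * x + y * y + z * z)
        if baseline is None:
            baseline = magnitude  # initialise with the first sample (mostly gravity)
        deviation = magnitude - baseline
        if abs(deviation) > threshold:
            # Sharp spike: report it unless we are still inside the previous tap.
            if i >= skip_until:
                taps.append(i)
                skip_until = i + refractory
        else:
            # Quiet sample: let the baseline follow slow arm motion and gravity.
            baseline += alpha * deviation
    return taps


if __name__ == "__main__":
    # Synthetic signal: gravity on z, slow sway on x, two short sharp taps.
    signal = [(0.2 * math.sin(2 * math.pi * i / 400), 0.0,
               2.5 if i in (100, 101, 300, 301) else 1.0) for i in range(400)]
    print(detect_taps(signal))  # [100, 300]
```

The slow sway changes the magnitude by only a few hundredths of a g, so it never crosses the threshold, while each tap does so once and its second sample falls inside the refractory window.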

Once the wristband detects a tap and identifies the tapping finger, TapID cross-checks this with the VR headset’s hand tracking system, which determines whether the tip of that finger is near a surface (within 30mm). If it isn’t, TapID rejects the tap. To detect taps on the arm, the authors approximated the arm surface as a 40mm radius around a tracked hand joint. The tap location also comes from the hand tracking system.
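The rejection step can be sketched as a geometric check. This is a minimal sketch, assuming the tabletop is modelled as a plane and the arm as a sphere of radius 40mm around one tracked joint; the `Plane` type, the `gate_tap` function, and the finger names are hypothetical and are neither the Quest SDK’s API nor the authors’ code.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]  # positions in millimetres

SURFACE_TOLERANCE_MM = 30.0  # from the paper: fingertip must be within 30mm of a surface
ARM_RADIUS_MM = 40.0         # from the paper: arm approximated as 40mm around a tracked joint


@dataclass
class Plane:
    point: Vec3   # any point on the tabletop
    normal: Vec3  # unit-length surface normal


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def distance_to_plane(p: Vec3, plane: Plane) -> float:
    d = _sub(p, plane.point)
    n = plane.normal
    return abs(d[0] * n[0] + d[1] * n[1] + d[2] * n[2])


def distance_to_arm(p: Vec3, joint: Vec3) -> float:
    """Distance from p to a sphere of radius ARM_RADIUS_MM around the joint (0 if inside)."""
    return max(0.0, math.dist(p, joint) - ARM_RADIUS_MM)


def gate_tap(finger: str,
             fingertips: Dict[str, Vec3],
             table: Optional[Plane] = None,
             arm_joint: Optional[Vec3] = None) -> Optional[Vec3]:
    """Return the tap location if the classified finger is near a surface, else None."""
    tip = fingertips.get(finger)
    if tip is None:
        return None  # finger not tracked, e.g. outside the camera's view
    distances: List[float] = []
    if table is not None:
        distances.append(distance_to_plane(tip, table))
    if arm_joint is not None:
        distances.append(distance_to_arm(tip, arm_joint))
    if distances and min(distances) <= SURFACE_TOLERANCE_MM:
        return tip  # the tap location comes from hand tracking
    return None
```

For example, with the table at z = 0, an index fingertip at a height of 12mm passes the check, while a middle fingertip 80mm above the table is rejected unless it is close enough to the tracked arm joint.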

Results

The researchers conducted an experiment to measure tap detection and finger identification accuracy. Participants tapped 30 times with each finger of one hand, then did the same with the other hand, and the whole procedure was repeated three more times. For ground truth, the authors recorded the surface acoustic signal with a stethoscope taped to the tabletop. To evaluate how well their wrist-sensing method generalizes, the authors also ran the study using a Fitbit-like sensor configuration (two accelerometers on the rigid PCB) and a smartwatch-like one (a single accelerometer). I think the results are rather promising:

Tap event detection is very accurate and suitable for practical use, with an F1 score of 0.996 across all three sensor configurations.

Finger identification is less accurate: cross-person classification accuracy was 0.87.

However, fine-tuning with just 10 samples from the user increased the accuracy to 0.91.

Distinguishing the middle and ring fingers from each other is the most challenging, whereas thumb and pinky taps are identified most accurately.

Cross-person finger classification accuracy without fine-tuning increased when the authors evaluated only participants with average wrist sizes, suggesting that training data covering a wider range of wrist sizes could improve accuracy.
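To make the F1 score for tap detection concrete: detected taps are matched against the ground-truth taps, and F1 is the harmonic mean of precision and recall. The sketch below assumes greedy matching within a hypothetical time tolerance and uses made-up timestamps; the paper’s exact matching rule may differ.

```python
from typing import List, Sequence, Tuple


def match_events(detected_ms: Sequence[float],
                 truth_ms: Sequence[float],
                 tolerance_ms: float) -> Tuple[int, int, int]:
    """Greedily pair each ground-truth tap with the closest unused detection.

    Returns (true positives, false positives, false negatives).
    """
    used: List[bool] = [False] * len(detected_ms)
    tp = 0
    for t in truth_ms:
        best_j, best_dist = -1, tolerance_ms
        for j, d in enumerate(detected_ms):
            if not used[j] and abs(d - t) <= best_dist:
                best_j, best_dist = j, abs(d - t)
        if best_j >= 0:
            used[best_j] = True
            tp += 1
    return tp, len(detected_ms) - tp, len(truth_ms) - tp


def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall, equivalently 2TP / (2TP + FP + FN)."""
    if tp == 0:
        return 0.0
    return 2 * tp / (2 * tp + fp + fn)


if __name__ == "__main__":
    truth = [100, 400, 700, 1000]      # ground-truth tap onsets (made-up example)
    detected = [105, 398, 710, 1300]   # wristband detections (made-up example)
    tp, fp, fn = match_events(detected, truth, tolerance_ms=20)
    print(tp, fp, fn, f1_score(tp, fp, fn))  # 3 1 1 0.75
```

Here one missed tap and one spurious detection out of four taps already pull F1 down to 0.75, which shows how rare both kinds of error must be to reach 0.996.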

Related Work

There have been multiple efforts toward minimally intrusive finger identification during touch. However, many of these methods trade convenience (e.g., a less comfortable sensor placement) for accuracy, or vice versa. Here are some examples:

A Myo armband worn near the elbow identifies the touching finger from electromyography (EMG) signals. Within-user accuracy was 0.75, but it dropped to 0.50 across users. (TouchSense: Classifying Finger Touches and Measuring Their Force with an Electromyography Armband)

Piezoelectric vibration sensors placed on each finger identify which finger tapped. Because every finger has its own sensor, the system supports multi-touch. (WhichFingers: Identifying Fingers on Touch Surfaces and Keyboards using Vibration Sensors)

On-skin touch detection that combines an RF waveguide, which detects taps, with computer vision, which tracks finger position. (ActiTouch: Robust Touch Detection for On-Skin AR/VR Interfaces)

Most related work on touch detection has also produced relatively unreliable results. Camera-based methods can locate touches accurately but struggle to tell whether a touch has actually occurred, so they either register extra touches or miss real ones. Here are some examples:

An OptiTrack motion-capture system was used to evaluate typing performance in VR. This is currently the most precise method for detecting typing. (Physical Keyboards in Virtual Reality: Analysis of Typing Performance and Effects of Avatar Hands)

Microsoft’s HoloLens and infrared cameras were used to detect touches, with a 3.5% rate of missed touches and a 19% rate of extra touches. (MRTouch: Adding Touch Input to Head-Mounted Mixed Reality)

Technical Implementation

Part of this paper’s contribution is the extensive experimentation the authors did to find the best configuration for TapID. For brevity, I will highlight only the key components:

Hardware: TapID uses low-power LIS2DH accelerometers by STMicroelectronics, embedded in a flexible PCB. The main board houses a Dialog Semiconductor DA14695 system-on-chip.

Neural network setup: The paper details the network’s architecture and hyperparameters, but in essence the authors trained a convolutional neural network (CNN) with supervised learning to identify the tapping finger.
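For readers unfamiliar with CNNs on sensor data, the following shows the general shape of such a classifier: a 1D convolution slides over a short window of accelerometer samples, the strongest response of each filter is pooled, and a linear layer scores the five fingers. This is a hypothetical, untrained toy (one convolution layer, random weights, pure Python) and not the authors’ architecture; supervised training would fit the weights to labelled taps.

```python
import math
import random
from typing import List, Sequence

FINGERS = ["thumb", "index", "middle", "ring", "pinky"]


def conv1d_relu(x: Sequence[Sequence[float]],
                kernels: Sequence[Sequence[Sequence[float]]],
                biases: Sequence[float]) -> List[List[float]]:
    """'Valid' 1D convolution followed by ReLU.

    x: [channels][time]; kernels: [filters][channels][width].
    Returns [filters][time - width + 1].
    """
    channels, length = len(x), len(x[0])
    width = len(kernels[0][0])
    out: List[List[float]] = []
    for kernel, bias in zip(kernels, biases):
        row = []
        for t in range(length - width + 1):
            s = bias
            for c in range(channels):
                for w in range(width):
                    s += kernel[c][w] * x[c][t + w]
            row.append(max(0.0, s))
        out.append(row)
    return out


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


class TinyFingerCNN:
    """Hypothetical toy model: conv -> ReLU -> global max pool -> linear -> softmax."""

    def __init__(self, channels: int = 3, filters: int = 8, width: int = 5, seed: int = 0):
        rng = random.Random(seed)  # random, untrained weights for illustration only
        self.kernels = [[[rng.gauss(0.0, 0.3) for _ in range(width)]
                         for _ in range(channels)] for _ in range(filters)]
        self.biases = [0.0] * filters
        self.dense = [[rng.gauss(0.0, 0.3) for _ in range(filters)] for _ in FINGERS]
        self.dense_bias = [0.0] * len(FINGERS)

    def predict_proba(self, window: Sequence[Sequence[float]]) -> List[float]:
        """window: [channels][time] accelerometer samples around a detected tap."""
        feature_maps = conv1d_relu(window, self.kernels, self.biases)
        pooled = [max(row) for row in feature_maps]  # strongest response per filter
        logits = [sum(w * f for w, f in zip(ws, pooled)) + b
                  for ws, b in zip(self.dense, self.dense_bias)]
        return softmax(logits)


if __name__ == "__main__":
    window = [[math.sin(0.3 * t + c) for t in range(32)] for c in range(3)]
    probs = TinyFingerCNN().predict_proba(window)
    print(FINGERS[probs.index(max(probs))], [round(p, 3) for p in probs])
```

Because the weights are random, the printed prediction is meaningless; the point is the data flow from a window of samples to one probability per finger.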

Headset: Oculus Quest, with hand tracking provided by the Quest’s built-in SDK.

Discussion

Why is this interesting?

The more you think about TapID, the more its cleverness shows. Because the interaction is tapping, future applications built on this device can leverage the extensive research on 2D interfaces. We are also more accustomed to tapping and may find it more efficient for productivity tasks in VR. The haptic feedback and momentary rest that passive surfaces provide are added benefits. Beyond the interaction method itself, TapID’s results show that it is neither person- nor hand-specific, and that it is fast and precise (with promising room to grow, of course).

Limitations/Concerns

There are several limitations to TapID worth noting. For one, although the wristband itself is small, it is still connected to a PC over a serial link and requires a GPU. TapID also requires the user’s hands to be within the headset camera’s field of view, which means the user has to keep looking at their hands while typing instead of relying on proprioception. Finally, TapID currently detects only single taps; it does not yet support simultaneous taps or other finger gestures.

Opportunities

There are many opportunities to evolve TapID. For one, I would be interested in work that expands the input types TapID accepts, such as swipes or simultaneous multi-finger taps. I can also see TapID being integrated into other devices to support a wider variety of interactions. I would also like to see user studies in which participants use TapID for productive work. Is it as comfortable and effective as we make it out to be? How long can a participant spend in VR with it?